Best practices for secure AI service adoption in business

AI is an extremely powerful, revolutionary, and now immensely popular tool. Understanding its basics is essential for using it critically and to your advantage.

Artificial Intelligence is on everyone’s lips and screens.

Its arrival represents a monumental shift for businesses, offering immense opportunities for growth and optimization.

It is developing at an ever-accelerating pace, particularly since the major players have intensified competition and broadened the playing field by integrating Gemini into the Google Workspace Business productivity suite and Copilot into Microsoft 365 Personal and Family plans, and by launching AI Overviews and SearchGPT, among other moves. As a result, this extremely powerful tool is now widely accessible to anyone with an internet connection.

As with any tool – especially one so revolutionary and widespread – its use also carries significant risks, particularly in terms of protecting company information assets.

The adoption of artificial intelligence is not just a technological matter, but also a cultural and legal one.

Adopting AI requires a conscious and structured approach so that it truly becomes a driver for growth and doesn’t backfire.

True to our mission to support companies in adopting technologies securely and strategically, here is a framework of key best practices for businesses preparing to use AI tools.

- Know the fundamentals of AI to use it critically

You don’t need to reinvent yourself as a data scientist, but it is essential to have a basic understanding of how AI models work to use them critically and consciously.

- DO NOT insert or allow access to sensitive or proprietary data

AI services such as the free versions of ChatGPT, Gemini, Perplexity, and Copilot often use user prompts to further train their models.

This means that the data you enter – product ideas, business strategies, data covered by NDAs, non-public financial data, proprietary source code, etc. – may not remain confidential and could enter future training datasets, becoming accessible to third parties.

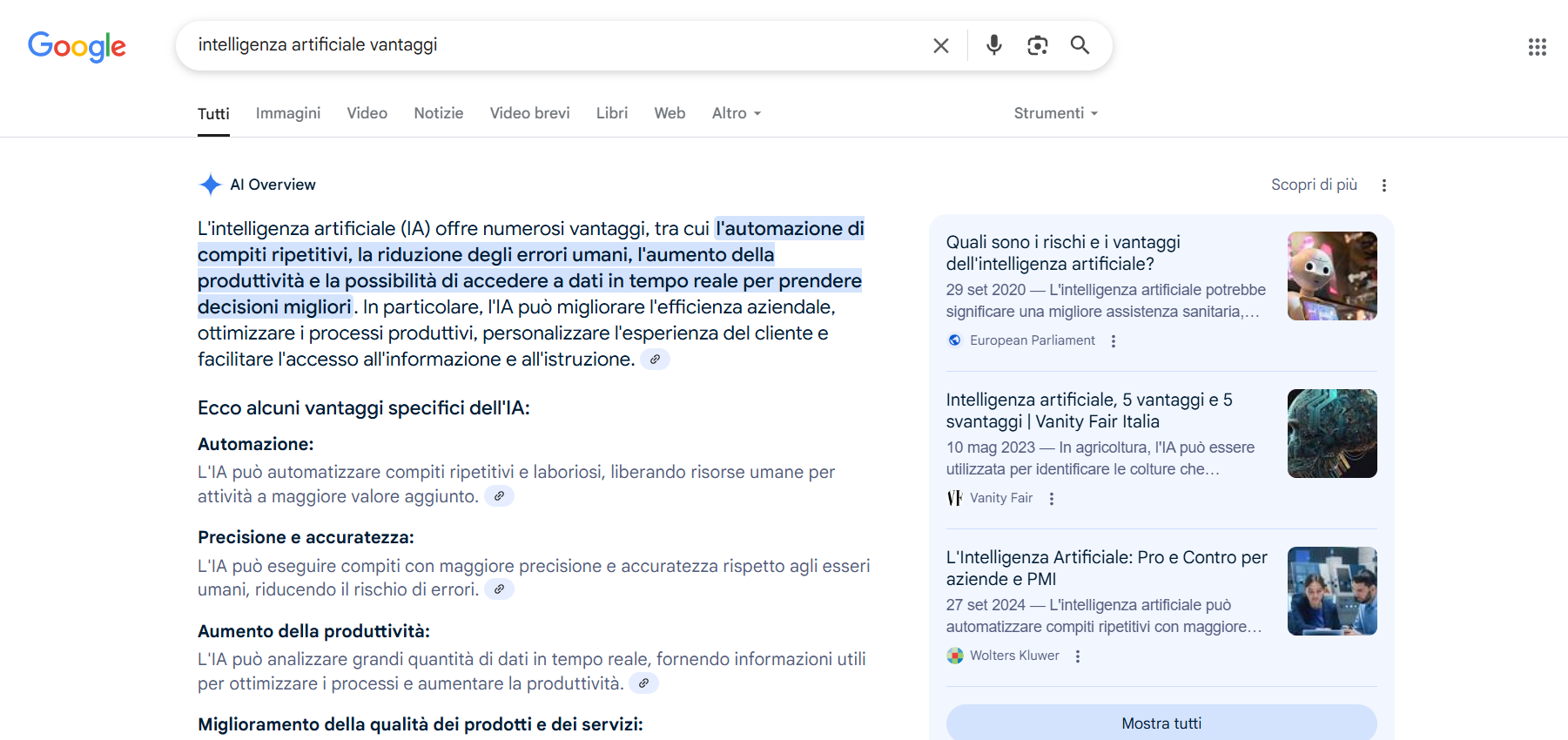

Furthermore, consider that the interfaces for accessing artificial intelligence are already numerous and not limited to stand-alone chatbot apps: extensions and plug-ins, integrations in online software and search engines (see Screenshot 1), not to mention the countless mobile applications that integrate AI services and which often require unconditional and uncontrolled access to the data contained on mobile devices.

Screenshot 1. AI Overview integrated in Google Search

- Carefully read the Terms of Service and Privacy Policies of the AI services you intend to use

Before using any AI tool, it is essential to understand how the provider handles the data provided to it.

Where is the data stored? How long is it retained? Is it used for training? Is it shared with third parties? Most free services offer few guarantees of confidentiality for the data entered.

For your convenience, below are the links to the Terms of Service and Privacy Policies of the main AI providers:

OpenAI, Data usage for ChatGPT

OpenAI, Europe Privacy Policy

Google, Gemini Apps Privacy Hub

Google, Generative AI in Google Workspace Privacy Hub

Google, Gemini Safety Center

Microsoft, Copilot AI Experience Terms

Microsoft, Data, Privacy and Security for Microsoft 365 Copilot

Microsoft, Data, privacy, and security for web search in Microsoft 365 Copilot and Microsoft 365 Copilot Chat

Microsoft, AI security for Microsoft 365 Copilot

Microsoft, Enterprise data protection in Microsoft 365 Copilot and Microsoft 365 Copilot Chat

Microsoft, Microsoft Privacy Statement – Artificial Intelligence and Microsoft Copilot capabilities

Perplexity, Perplexity Terms of Service

Perplexity, Perplexity Pro for Enterprise | Terms of Service

Perplexity, Perplexity Privacy Policy

Perplexity, Perplexity Security Hub

Perplexity, Perplexity Trust Center

Anthropic, Claude Consumer Terms of Service

Anthropic, Claude Commercial Terms of Service

Anthropic, Privacy Policy

Anthropic, Usage Policy

Anthropic, Trust & Safety Center

- Evaluate private AI solutions or a controlled cloud environment

Consider adopting enterprise AI services (see Screenshot 2) that offer stronger guarantees regarding data processing, dedicated models, or the option to deploy AI on-premises or in a private cloud.

Screenshot 2. Gemini and Copilot business AI chatbot interfaces

- Define clear internal usage policies

Establish rules for employees on using AI systems, specifying which tools are permitted and what types of data must under no circumstances be entered into public AI services.

In addition, consider providing specific professional training to your staff on the AI services you plan to use.
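One way to make such a policy enforceable is to express it as data that internal tooling can check before a request leaves the company. The following is a minimal sketch under stated assumptions: the `AIUsagePolicy` class, the tool name `enterprise-assistant`, and the data category labels are all hypothetical placeholders, and how data gets classified into categories is left out entirely.

```python
from dataclasses import dataclass


# Hypothetical policy model: tool names and category labels are
# placeholders, not recommendations for any specific product.
@dataclass(frozen=True)
class AIUsagePolicy:
    # Tools employees are allowed to use.
    approved_tools: frozenset
    # Data categories that must never be sent to an AI service.
    forbidden_categories: frozenset

    def check(self, tool: str, data_categories: set) -> list:
        """Return a list of policy violations (empty if the request is allowed)."""
        violations = []
        if tool not in self.approved_tools:
            violations.append(f"tool not approved: {tool}")
        # Sort so the report order is stable regardless of set ordering.
        for category in sorted(data_categories & self.forbidden_categories):
            violations.append(f"forbidden data category: {category}")
        return violations


POLICY = AIUsagePolicy(
    approved_tools=frozenset({"enterprise-assistant"}),
    forbidden_categories=frozenset({"source_code", "nda_material", "financials"}),
)

print(POLICY.check("enterprise-assistant", {"marketing_copy"}))  # no violations
print(POLICY.check("free-chatbot", {"source_code"}))  # two violations
```

Keeping the policy in one declarative object means the written rules and the technical check can be reviewed and updated together.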

While personal data protection falls outside our direct purview, it should be noted that processing data with AI introduces new privacy complexities and, consequently, new GDPR compliance considerations:

- Evaluate the need for a Data Protection Impact Assessment (DPIA)

If the use of AI is likely to result in a high risk to the rights and freedoms of data subjects, a DPIA must be carried out before processing begins.

- Anonymize personal data

Anonymize personal data before providing it to an AI service. This will drastically reduce the risk in the event of a data breach or misuse by the AI provider.

- Inform data subjects

Clearly inform employees, customers, and suppliers when their personal data is processed using AI, including the purposes, the underlying logic of any automated decisions, and their rights.
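As a rough illustration of the anonymization point above, the sketch below masks email addresses and phone-like numbers in a prompt before it is sent anywhere. It is a simplified example under stated assumptions: the two regular expressions and the `redact` function are hypothetical, they cover only common formats, and pattern-based masking of this kind is redaction rather than full anonymization (the person's name survives in the example), so it should not be treated as sufficient for GDPR purposes on its own.

```python
import re

# Illustrative patterns only: they catch common email and phone formats,
# not every form of personal data (names, addresses, IDs are not covered).
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace emails and phone-like numbers with fixed placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


prompt = "Contact Maria at maria.rossi@example.com or +39 02 1234 5678."
print(redact(prompt))
# prints: Contact Maria at [EMAIL] or [PHONE].
```

In practice, a step like this would sit alongside manual review or dedicated tooling for detecting personal data, since free text can identify a person in ways no simple pattern catches.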

- Pay attention to cybersecurity

Integrating AI systems can create new attack surfaces.

Ensure that AI tools are securely integrated into the enterprise IT infrastructure and that cybersecurity best practices are followed.

- Keep an eye on AI regulation at the EU level (e.g., AI Act) and any national implementations

The AI Act (Regulation (EU) 2024/1689) is widely described as the world’s first comprehensive legal framework for artificial intelligence. Its primary objective is to ensure that AI systems used in the EU are safe and respect fundamental rights, while still supporting innovation, and it does so through a risk-based approach.

- Implement human review for critical information and decision-making processes

Generative artificial intelligence models are trained on vast amounts of data that may contain bias (social, racial, gender).

At the same time, they can produce content that is plausible but completely false or inaccurate (so-called hallucinations).

It is therefore essential not to blindly trust an AI’s output and to implement human review for critical information and decision-making processes.

Artificial Intelligence is an incredible tool, but its use in business requires an informed and security-based approach.

Ignoring the risks related to data protection and confidentiality can lead to loss of trade secrets, reputational damage, and significant financial losses.

Adopting AI properly means integrating it into a comprehensive company IT strategy that prioritizes security, compliance and data governance.